- What’s Aura Frames?

- Disclosures

- What causes the sharp increase in traffic?

- Scaling over the years

- Postgres Scaling Challenges and Solutions

- Christmas 2024 Retrospective

- Postgres Christmas 2025

- Workload-driven “Whole table sharding”

- Postgres and Infra Metrics Christmas 2025

- Switchover to New DBs

- Reflecting back on the plan

- Thank You and Looking Forward

On Christmas Day 2024, Postgres infrastructure powering the Aura Frames API had problems under peak load, being unavailable for three hours and disrupting the experience for new customers. With Christmas being the biggest day of the year, the team knew it would need improvements for 2025 and beyond.

One year later, much of the resource intensive data access was reworked, the Postgres infrastructure was upsized, and this approach not only survived, but thrived, providing reliable service through the holiday season.

Total Queries per second (QPS) peaked at 225,000 (226K TPS), with 100K QPS sustained over 10 hours for multiple days, and an average response time of 25 microseconds.

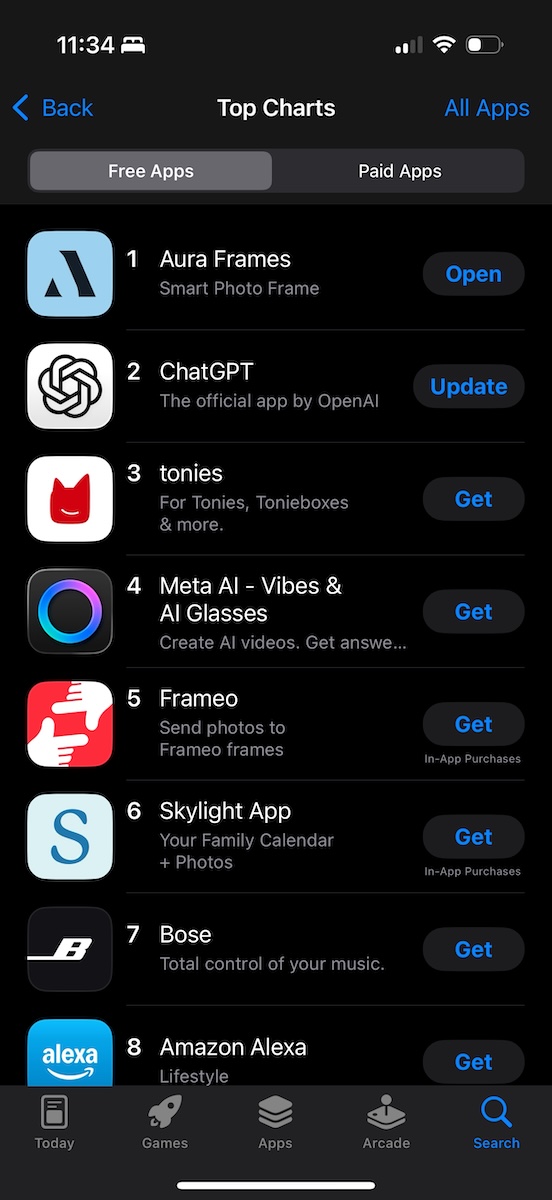

The improved reliability meant customers could smoothly set up new frames and add photos, and they did it more than ever, with the Aura Frames app reaching #1 in U.S. and Canadian Apple and Android App Stores on Christmas Day.

In this post we’ll look behind the scenes at months of engineering planning and execution that went into achieving that!

A follow-up post will dig into the Ruby on Rails side, while this one will focus on Postgres. I hope you'll be back for part 2!

What’s Aura Frames?

Aura Frames (Aura Home, Inc.) is the company behind modern, high-quality, Wi-Fi connected digital photo frames that customers love.

The frames are easy to use via free iOS and Android apps, don’t require a subscription, and offer unlimited cloud storage for photos and videos. Once set up, family members can be invited to contribute photos and videos via the app from anywhere. Typically Aura frames have an average of 4 contributors adding content.

In 2025, more than 1 billion photos were shared to Aura frames globally.

While public engineering blog posts are limited, Aura was featured on the AWS Storage Blog in the past. Link: How Aura improves database performance using Amazon S3 Express One Zone for caching.

Disclosures

I began working with Aura in 2025. Aura does not have a public engineering blog, so we discussed writing a post here, where I regularly write about Postgres, Ruby on Rails, and scaling databases.

This post was written by me and I do not speak for the company. The company had the opportunity to review and make minor edits before publication.

The Christmas Day outage was a painful reality of scaling fast, and I appreciate Aura’s willingness to discuss it here.

I’m biased, but from my view the company is dedicated to continually improving the customer experience, in part with strategic investments in technical infrastructure to achieve higher levels of reliability.

With that covered, let’s take a look at how the frames are used and what drives the traffic.

What causes the sharp increase in traffic?

On Christmas Day, millions of customers set up hundreds of thousands of new Aura frames. The backend platform needs to work well for both existing customers and handle the load from new customer activity. For new customers it’s especially critical they have a good experience from their first moments with the product.

While the holiday timing is predictable, the rate of new frames and new photos added each year increases, adding a new amount of pressure to infrastructure components. Postgres is not easily horizontally scalable, and is costly to operate.

The average amount of increased peak TPS on Christmas Day was around ~4.5x, with the biggest increase on a DB being ~18x the normal value! To meet this demand, advanced capacity and financial planning were necessary, along with provisioning resources ahead of time and shrinking them back down afterwards.

Scaling over the years

The team has executed a variety of scaling tactics over the last half decade by employees and in conjunction with Postgres consultants. Scaling efforts often focused on reducing pressure on Postgres within the constraints of a single primary instance, while preserving its operational simplicity. (Squeeze the hell out of the system you have).

Scaling is more straightforward on the stateless, HTTP side. Aura uses AWS and has leveraged Auto Scaling Groups (ASGs), which can scale up to thousands of EC2 instances running the web application stack, image processing, PgBouncer, and other services.

For Postgres, vertical scaling was leveraged as long as possible.

Here’s a look at the primary database instance at peak for Christmas Day 2024. Note that this is the current largest available instance for RDS.

| Postgres Version | RDS Instance Class | vCPU | Memory (GiB) | Storage Type | DLV |

|---|---|---|---|---|---|

| 14.x | db.r6g.48xlarge | 192 | 1536 | io2 | ⚠️ |

Without a larger instance class to move to, the team no longer had vertical scaling as an option for improved reliability for Christmas 2025 and beyond.

⚠️ For Christmas 2024, the team had a Dedicated Log Volume (DLV) in place for replication from the main instance. A DLV reduces latency and improves reliability for replication.

Quoting from AWS Docs on Using a Dedicated Log Volume (DLV):

A DLV moves PostgreSQL database transaction logs … to a storage volume that’s separate from the volume containing the database tables.

While replication lag is “normal” (asynchronous streaming replication) and varies due to write pressure, vacuum activity, and more, performance on the primary had not previously been affected by replication lag before.

Unfortunately that changed during peak load on Christmas 2024. More details are below in the Christmas 2024 Retrospective section.

Before diving into that, let’s briefly cover some of the generic challenges of reaching the scalability limits of a single instance.

Postgres Scaling Challenges and Solutions

The use of Postgres by Aura faces all kinds of common Postgres scaling challenges.

- Insert latency. To help reduce latency, foreign key constraints are not used. Indexes on high write tables are minimized to critical ones. Indexes are periodically rebuilt (

reindex concurrently). - Replication. Need read-after-write and can have high replication lag, read replicas have historically not been used for read queries. Read queries run on the primary. Fast storage is used (

io2) and a DLV. - Buffer cache and high cache hit rates. It’s critical to have as much memory as possible for buffer cache to achieve sub-millisecond query executions. In-memory access reduces storage device access and IOPS.

- IOPS spikes that exceed Provisioned IOPS. It’s critical to avoid exceeding allocated PIOPS, otherwise queuing and high latency occurs.

- The team faced CPU spikes in the single primary configuration, during vacuum, reproduced in load testing. The CPU spike caused knock on high query latency.

- During the peak load period, tens of thousands of client connections are established.

- The application has per-user counts that constantly change. For example, could social media-style likes, comments, activity feeds, and more. These counts are stored in Memcached when possible, with connections managed by HAProxy.

- Disruptive Autovacuum vacuums during busy periods. To minimize disruption, Autovacuum is throttled to run slower (

autovacuum_vacuum_cost_limit,autovacuum_vacuum_cost_delay). Tables with heavy dead tuple growth are vacuumed manually in a low activity period overnight, not by Autovacuum. - Index bloat. The database uses a primary key data type that isn’t 100% ideal for minimizing bloat. Index bloat occurs meaning indexes occupy more space and aren’t as efficient to scan. To solve that, indexes are periodically rebuilt, but rebuilding adds a lot of IOPS pressure so the timing needs coordination.

- Configuration complexity. Postgres parameters (GUCs) are modified beyond what RDS provides very strategically, with the exception of Autovacuum parameters as Vacuum is regularly being monitored and adjustments made to control IO spikes.

- Background work state, queue style data. The team had previously created a separate Postgres database used to manage background jobs.

Christmas 2024 Retrospective

Unfortunately on Christmas Day 2024, the team, platform, and customers faced a significant outage. A root cause analysis revealed that the main contributor was running out of space on the DLV, due to the growth of write ahead logs (WAL) filling it up.

The team had provisioned a Dedicated Log Volume (DLV) offering lower latency as a dedicated volume for WAL log storage.

The team traced the root cause back to a change introduced in Postgres 14.1 (the prior year ran Postgres 13.x which used S3 for WAL archival) related to the new use of replication slots for replication.

“We weren’t aware RDS had changed in-region replication to use replication slots by default in Postgres 14.1. This caused the amount of WAL stored on the primary to be unbounded when the replica lagged.”

(AWS source: RDS “Monitoring replication slots for your RDS for PostgreSQL DB instance”) “RDS for PostgreSQL 14.1 and higher versions use replication slots for in-Region read replicas.”

From my own research, the benefits of using replication slots for replication seemed to be speeding up replica “catch up” or full recovery if needed. Postgres docs say “replication slots retain only the number of segments known to be needed” (less overall content). (Replication Slots).

The trade-off off though can be severe, as there’s the possibility of unbounded slot growth for inactive (or heavy lagging consumption) slots, resulting in pg_wal filling the storage volume and causing Postgres to shut down.

This is documented in community Postgres under Disk Full Failure (Docs for Postgres 16.x) or under “No space left on device” ENOSPC on the wiki.

The server will crash and run crash recovery.

One way to limit the growth is to set max_slot_wal_keep_size (Postgres replication docs) (new in 13, default value is -1 which means disabled) but initially it’s not set. wal_keep_size, max_slot_wal_keep_size, and max_wal_size were all updated going forward. In a worst case, this should result in the replica becoming unusable, but not causing the primary to shut down.

Besides the replication issue, the team was also able to reproduce problematic high CPU usage under synthetic load testing during vacuum. A variety of theories for the high CPU use were analyzed over the summer of 2025, but ultimately none had very strong evidence. RDS Postgres limits access to the underlying host OS making it impossible to directly use Linux profiling tools like perf (as compared with something like running community Postgres on EC2). Despite that limitation, the team wanted to stick with RDS for its other benefits.

In exploring multi-instance designs, the team began creating proofs of concept for sharding Postgres from the application. Various approaches were explored, but the team heavily preferred mature solutions and a high degree of owner-operator control, thus a custom solution with Ruby on Rails framework capabilities had the lowest friction.

Ruby on Rails Horizontal Sharding was partially built out and could have been a viable solution, but ultimately was not chosen.

With more than half of 2025 gone, Christmas Day 2025 was looming and daunting. An additional constraint on solutions was what could be built and supported in time.

The clock was ticking!

Postgres Christmas 2025

The solution that seemed to fit the best would be a custom solution, rewriting a lot of the application query layer, taking direct control of key queries and making changes needed to distribute the tables.

To prepare, the top 10 tables by write operations and size were analyzed. All queries for those tables would need to be analyzed for incompatible elements that don’t work across a database boundary, like joins and some subqueries.

Ultimately the 10 tables were distributed to 7 new primary instances (making 8 in total), some DBs with as few as 1 table. All reads and writes continued to flow from the same Ruby on Rails codebase, not new microservices. To achieve that we’d use Active Record Multiple Databases support. That meant that each primary database would get the full accoutrement, including its own named config, the option of a read replica, and the ability to manage schema definition DDL changes (Rails “Migrations”). The production configuration would be mirrored in all lower environments so that the extensive unit test suite would run across all 8 databases. The only difference in developer environments was the 8 databases ran on one Docker Postgres instead of being on their own server instances.

With the plan in place, it was time to start coding! We got started in earnest around August 2025 with 3-4 months available to execute and validate the plan.

With each instance dedicated to one or a couple of tables, there was much more CPU, Memory, and IOPS available in total. This allowed each of the instances to be over provisioned temporarily before Christmas, adding headroom, availability, and reliability.

We determined the query workload for the biggest table by size, row count, call frequency and % of IO would still fit ok on a single big instance, without needing to shard the table rows.

Here’s what the instances were scaled up to for Christmas 2025.

| Postgres Version | RDS Instance Class | vCPU | Memory (GiB) | Storage Type | DLV |

|---|---|---|---|---|---|

| 17.6 | db.r6g.48xlarge | 192 | 1536 | io2 | ✅ |

| 17.6 | db.r6g.48xlarge | 192 | 1536 | io2 | ✅ |

| 17.6 | db.r6g.48xlarge | 192 | 1536 | io2 | ✅ |

| 17.6 | db.r6g.16xlarge | 64 | 512 | gp3 | |

| 17.6 | db.r6g.16xlarge | 64 | 512 | gp3 | |

| 17.6 | db.r6g.16xlarge | 64 | 512 | gp3 | |

| 17.6 | db.r6g.16xlarge | 64 | 512 | gp3 | |

| 17.6 | db.r6g.16xlarge | 64 | 512 | gp3 | |

| Totals | 832 vCPU (~4.3x ↗) | 6656 GiB (~4.3x ↗) |

This capacity powered Christmas well, but of course was expensive to operate. We’ll look at how we scaled down and reigned in costs after Christmas.

Workload-driven “Whole table sharding”

We ended up using the term “whole table sharding” and the tables picked tended to have the most writes, the most rows, and be the most challenging to vacuum quickly or rebuild indexes for.

We were able to gradually modify all the application queries and get everything rolled out where it was backwards compatible, then we could cut over.

To transition the row data, wanting to initially replicate it, we tried using AWS pglogical and logical replication directly but were not able to successfully replicate the data in a reasonable amount of time.

We may revisit that in the future, however we ultimately decided on physical replication which copied the whole instance, before cutting over to the new one. While more wasteful initially, we knew we could operate that approach reliably and with a minimal amount of downtime.

The major downside of this approach was that we had to repeat it 7 times, duplicating the entire database each time, consuming a ton of extra space temporarily.

We decided it would be ok to temporarily allow for the excess space consumption on the new instances, then scale the provisioned space back down after Christmas by using the AWS Blue/Green deployments. B/G Deployments made the process pretty smooth. Once we’d cleaned up all the unneeded tables, we provisioned the new (green) instance with less space, and cut over to it, discarding the old (blue) instance.

Postgres and Infra Metrics Christmas 2025

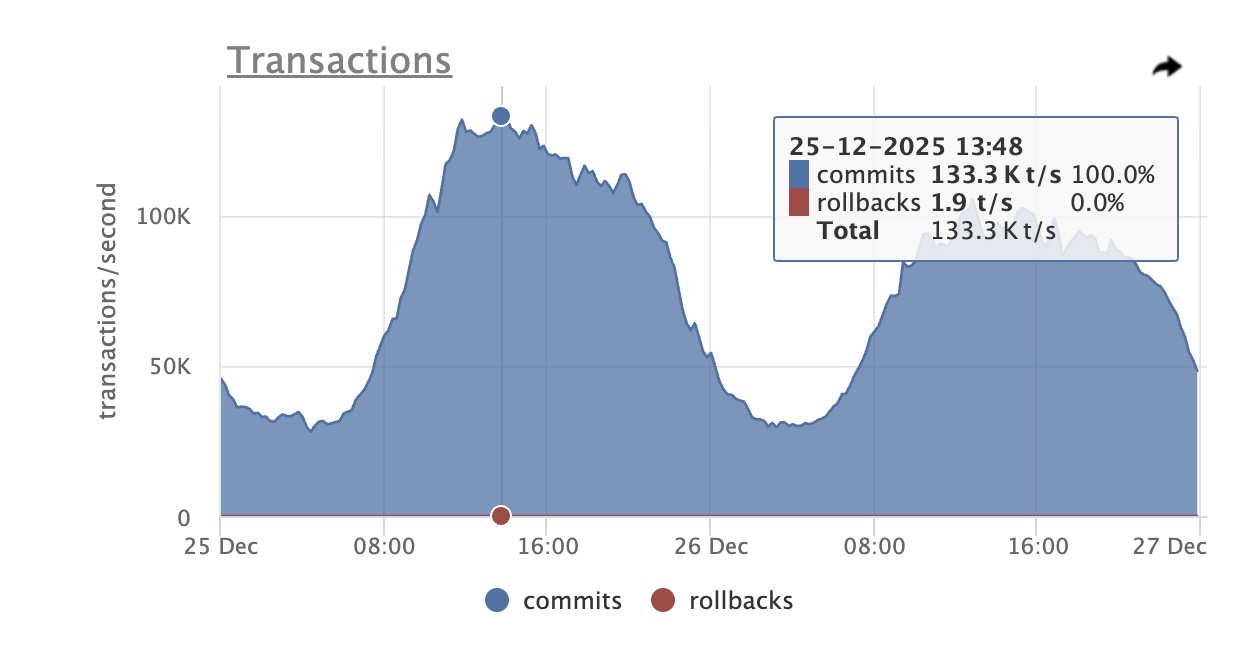

All Postgres instances were upgraded to 17.6 in the Fall of 2025. TPS and QPS measured by Odarix. PgBouncer, Memcached, HAProxy metrics from CloudWatch. Query and schema details from PgAnalyze.

Main DB 133K TPS Peak Christmas Day Odarix Screenshot

Main DB 133K TPS Peak Christmas Day Odarix Screenshot

| Metric | Normal | Christmas Day | Notes |

|---|---|---|---|

| Main DB TPS Peak | 40K | 133K (3.3x ↗) | |

| All DB TPS Peak Sum | 50K | 226K (4.5x ↗) | |

| Average query latency | 25 microseconds | ||

| Largest table | 7TB | ||

| Total space | 30TB | ||

| Largest row count | 7B (Billion) | ||

| PgBouncer Instances (Sum of all) | 73 | 230 (~3.1x ↗) | |

| PgBouncer Client Connections | 7.3K | ~40K (~5.5x ↗) | |

| Biggest Dead Tuple Growth | 8M | 80M (~10x ↗) | ~4 hrs. runtime |

| Memcached Instances | 21 | 36 (1.7x ↗) | |

| Memcached Connections | 9.3K | ~30K (3.2x ↗) |

Traffic grows in the week before Christmas, but on Christmas day (December 25) it really takes a sharp upward trajectory. The main sustained peak load period is over 10 hours on Christmas Day, with the main DB sustaining > 100K TPS from 10:00:00 to 20:00:00 US Central Time.

Employees noticed the free Aura Frames iOS and Android apps were moving up in the ranking charts. Excitement grew into the evening seeing the app move into the Top 10, all the way to the #1 position. 🎉 I grabbed the screenshot below at around 11:30 PM CT December 25.

Screenshot showing the Aura Frames app at the #1 rank in the U.S. Apple App Store

Switchover to New DBs

To actually switch over to new server instances, effectively relocating the tables, it was critical to not lose any write operations and to minimize user-facing downtime.

Initial setup steps:

- For the RDS primary database, create a new read replica. It will need as much allocated space as the original instance. Use physical replication.

- Set up AWS SSM parameters for the new database to be used by Ruby on Rails and PgBouncer.

- Create a new PgBouncer Auto Scaling Group (ASG) for the new DB. Set the SSM parameter to the network load balancer endpoint.

- Route application traffic through the new PgBouncer but have it continue to point at the original DB via an environment variable. Changing this param would be the sole change in the brief downtime period.

Switchover steps:

- Bring all PgBouncer instances down (set ASG desired capacity to 0). No writes are happening here. Down for users.

- Wait for replication lag to reach zero. Promote the read replica to be a primary instance and wait for it to restart. Now it’s ready for writes.

- Change the environment variable PgBouncer uses to point at the database, to now point at the newly promoted primary instance.

- Bring back PgBouncer instances, setting the ASG desired capacity back to the original value.

This process involved 5-10 minutes of user-facing downtime. We performed it in an off-peak time to make it less impactful.

Clean up:

- Drop moved table(s) from original primary (carefully review this with team members in advance). Initially rename first, double check again, then drop the renamed table.

- Drop all unneeded tables from the new replacement primary, which was most tables or all but one.

With all the tables cleaned up on the new primary, we now had way more allocated space than needed, and this provisioned space costs money. This used to be more of a pain, but AWS launched Blue/Green Deployments and we used it to help here.

We set up a Blue/Green Deployment where the Blue was the newly promoted primary, and the Green would be a replacement instance with less space provisioned. Once replication was caught up, we cut over to Green, and thus achieved an instance with an appropriate amount of provisioned space.

Reflecting back on the plan

Some of the key contributors to successfully delivering reliable Postgres:

- Having an extensive test suite running tests continuously (CI) catching regressions as code was refactored, along with PR reviews from long-tenured team members (invaluable)!

- As refactorings happened in large batches, slicing out smaller chunks as smaller PRs for easier review, and less risk as releases.

- Using a canary release process for widespread changes, released to a single instance vs. the whole fleet, helped to validate correctness for issues that were hard to verify outside of the production environment.

- Having an extensive pre-production load testing capability to validate the accumulated changes under high load, across most of the API surface area of the platform, drilling into identified performance regressions.

- Having a large AWS infrastructure budget 😅 to work with and strategic spending, in order to over-provision instance sizes and IOPS temporarily to gain more reliability, thanks in part to being a profitable company!

- Having comprehensive CloudWatch metrics, dashboards, web, and Postgres logs for analysis (AWS Athena), time-series metrics galore (formerly StatHat), and best-in-class Postgres observability (PgAnalyze), to empower backend engineers with data access layer visibility.

- Running recent versions of Postgres and Ruby on Rails, unlocking useful features for high scale operations

- Experienced, long-tenured colleagues helping guide changes, focusing on high leverage opportunities, while generously sharing their knowledge and experience.

Thank You and Looking Forward

While the biggest payoff was seeing Postgres operate reliably through peak holiday traffic, it was equally rewarding to work with great engineers, be well supported by leadership, and benefit from the accumulated scalability engineering practices injected into the codebase. A special thank you to Josh, Ronnie, and EJ.

For 2026 we’re forming plans to further improve Postgres reliability, scalability, and cost efficiency.

If these types of posts are interesting to you, please consider subscribing to my blog or buying my book. If you’re an engineer interested in working on these types of challenges, please get in touch.

Thanks for reading!

If you're interested in Ruby on Rails details for peak traffic on Christmas Day 2025, you may also enjoy How Aura Frames Scales For Peak Load with Ruby on Rails (#1 in App Store) .